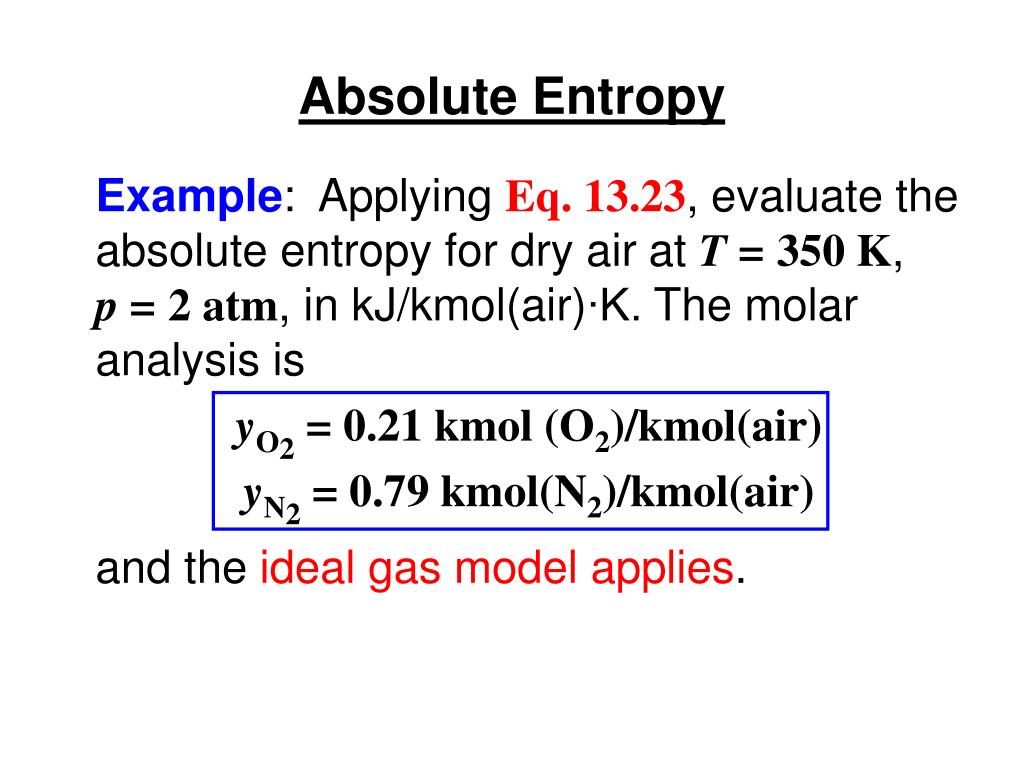

Thermodynamics is the study of the energy changes that accompany changes in temperature and transfers of heat. It also covers the work required to convert energy from one form to another.

For substances with approximately the same molar mass and number of atoms, standard molar entropy values fall in the order S°(gas) > S°(liquid) > S°(solid). For instance, S° for liquid water is 70.0 J/(mol·K), whereas S° for water vapor is 188.8 J/(mol·K). This order makes qualitative sense based on the kinds and extents of motion available to atoms and molecules in the three phases.

The entropy appearing in equations 12.9.9 and 12.9.11 is the absolute entropy, and we cannot calculate this unless we know the entropy at \(T = 0\) K.

In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent to the variable's possible outcomes. Given a discrete random variable \(X\), which takes values in the alphabet \(\mathcal{X}\) and is distributed according to \(p\colon \mathcal{X} \to [0, 1]\):

\[ H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x) \]

where \(\Sigma\) denotes the sum over the variable's possible values. For a signal, entropy is defined as follows:

\[ H = -\sum_{i} p_i \log p_i \tag{4.14} \]

where \(p_i\) is the probability of obtaining the value \(x_i\). The higher the Shannon entropy, the more information a new value in the process provides.

To calculate the entropy change for heating or cooling at constant pressure, we will use the change in entropy formula \(\Delta s = C_p \ln(T_f / T_i)\), where \(T_f\) and \(T_i\) indicate the final and the initial temperature, respectively. Take the specific heat at constant pressure as \(C_p = 4.1818\) kJ/(kg·K) and define the final and initial temperatures: \(T_f = 20\) °C, \(T_i = 100\) °C. The temperatures must be converted to kelvin before taking the ratio (293.15 K and 373.15 K). Solving the equation gives \(\Delta s \approx -1.01\) kJ/(kg·K).

For reactions, use the change in entropy formula \(\Delta S_{\text{reaction}} = S_{\text{products}} - S_{\text{reactants}}\).
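The two kinds of entropy discussed above can be computed in a few lines. The following is a minimal Python sketch, not a definitive implementation. The function names `entropy_change_constant_cp` and `shannon_entropy` are hypothetical and chosen for illustration. It assumes the specific heat stays constant over the temperature range, and it uses base-2 logarithms (bits) for the Shannon entropy, a choice the text above does not specify.

```python
import math


def entropy_change_constant_cp(cp, t_initial_c, t_final_c):
    """Return cp * ln(Tf / Ti) for temperatures given in degrees Celsius.

    Assumes cp is constant over the range. The temperatures are converted
    to kelvin because the logarithm needs a ratio of absolute temperatures.
    """
    t_initial_k = t_initial_c + 273.15
    t_final_k = t_final_c + 273.15
    return cp * math.log(t_final_k / t_initial_k)


def shannon_entropy(probabilities, base=2):
    """Return -sum(p * log(p)) over the outcomes with nonzero probability.

    The base is an assumption: base 2 gives the result in bits.
    """
    return -sum(p * math.log(p, base) for p in probabilities if p > 0)


# Values from the text: Cp = 4.1818 kJ/(kg*K), Ti = 100 degC, Tf = 20 degC.
delta_s = entropy_change_constant_cp(4.1818, 100.0, 20.0)
print(f"Entropy change on cooling: {delta_s:.3f} kJ/(kg*K)")

# Illustrative distributions (not from the text): a fair and a biased coin.
print(f"Fair coin:   {shannon_entropy([0.5, 0.5]):.3f} bits")
print(f"Biased coin: {shannon_entropy([0.9, 0.1]):.3f} bits")
```

The cooling result is negative because the final temperature is lower than the initial one. The fair coin has higher Shannon entropy than the biased coin because its outcome is harder to predict.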

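This change in entropy gives an indication of the spontaneity of a process or chemical reaction: by the second law of thermodynamics, a process is spontaneous when the total entropy of the system and its surroundings increases.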
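As a rough illustration of the products-minus-reactants formula, the sketch below applies it to the vaporization of water, using the two S° values quoted in this post (70.0 J/(mol·K) for the liquid, 188.8 J/(mol·K) for the vapor). The helper name `reaction_entropy` is hypothetical, and weighting each S° by its stoichiometric coefficient is an assumption that follows the usual convention rather than anything spelled out here.

```python
def reaction_entropy(products, reactants):
    """Return sum(n * S) over products minus sum(n * S) over reactants.

    Each argument is a list of (coefficient, S) pairs, with S in J/(mol*K).
    Weighting by the stoichiometric coefficient n is an assumed convention.
    """
    total_products = sum(n * s for n, s in products)
    total_reactants = sum(n * s for n, s in reactants)
    return total_products - total_reactants


# Standard molar entropies quoted in the text, in J/(mol*K).
S_LIQUID_WATER = 70.0
S_WATER_VAPOR = 188.8

# H2O(l) -> H2O(g), treated as a one-step "reaction".
delta_s = reaction_entropy([(1, S_WATER_VAPOR)], [(1, S_LIQUID_WATER)])
print(f"Entropy change of vaporization: {delta_s:.1f} J/(mol*K)")
```

The positive result matches the ordering S°(gas) > S°(liquid). It describes the system only, so on its own it does not settle whether the process is spontaneous at a given temperature.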
